Introduction

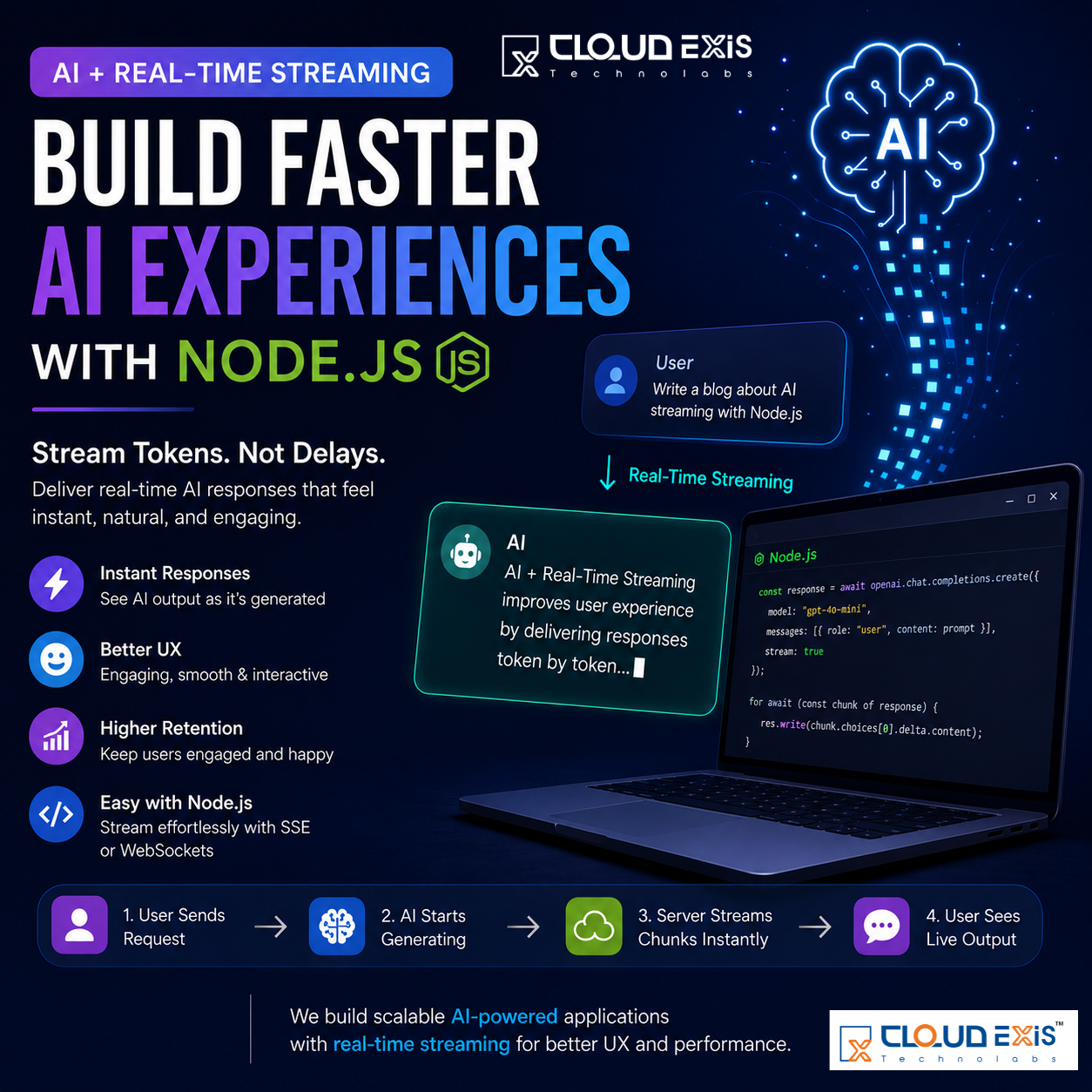

Artificial Intelligence has completely transformed how users interact with modern applications. From AI-powered chatbots and virtual assistants to content generators and coding copilots, users now expect instant responses and smooth experiences. But there’s one critical factor that separates average AI products from exceptional ones in 2026:

Real-Time Streaming.

Think about products like OpenAI's ChatGPT or other advanced AI assistants. The response doesn’t appear all at once after a long delay. Instead, the text starts appearing almost immediately, word by word, creating a natural and engaging interaction.

That seamless experience is powered by streaming architecture, and it has become one of the most important UX improvements in AI software development.

At Cloudexis Technolabs LLP, we help businesses build scalable AI-powered web and mobile applications using technologies like Node.js, Laravel, React, Flutter, and modern AI APIs. One major shift we are implementing in AI applications today is moving from traditional request-response systems to real-time streaming experiences.

In this blog, we’ll explore how AI streaming works, why it matters, and how Node.js makes it incredibly powerful for modern AI products.

The Traditional AI Response Problem

For years, web applications followed a simple pattern:

- User sends a request

- Server processes the request

- Server returns the complete response

This worked well for standard APIs and traditional web applications. But AI applications behave differently.

Large Language Models (LLMs) such as GPT-based systems generate responses token by token. A long AI-generated response may take several seconds to complete.

The problem?

Modern users hate waiting.

Even a 5–10 second delay can feel frustrating, especially in AI-powered applications where users expect conversational speed and real-time interaction.

Traditional response systems create several UX problems:

- Long loading states

- Poor perceived performance

- Increased user drop-off

- Higher abandonment rates

- Less engaging interactions

In 2026, users expect AI products to feel alive, responsive, and interactive.

That’s where streaming changes everything.

What Is Real-Time Streaming in AI?

Real-time streaming means sending generated content to the user as soon as it is produced, while the model is still generating the rest of the response.

Instead of waiting for the complete response, the backend continuously pushes chunks of generated text to the frontend in real time.

The flow becomes:

- User sends a prompt

- AI starts generating tokens

- Server streams chunks immediately

- Frontend displays live output

This creates the familiar “typing effect” users see in modern AI products.

The experience feels faster because users receive feedback instantly instead of staring at a blank screen.

Why Streaming Improves User Experience

Streaming is not just a technical enhancement.

It’s a psychological UX improvement.

Users feel more comfortable when they see progress happening immediately.

Even if the total generation time remains the same, streaming dramatically improves perceived speed.
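
To make that distinction concrete, here is a minimal sketch that measures both numbers for any stream: the time until the first chunk arrives and the total time. The `slowTokens` generator is a hypothetical stand-in for a model (5 chunks, 40 ms apart), not a real provider call.

```javascript
// Measures time-to-first-chunk and total time for any async iterable.
async function measureStream(stream) {
  const start = performance.now();
  let firstChunkMs = null;
  let chunks = 0;
  for await (const _chunk of stream) {
    if (firstChunkMs === null) firstChunkMs = performance.now() - start;
    chunks += 1;
  }
  return { firstChunkMs, totalMs: performance.now() - start, chunks };
}

// Hypothetical model stand-in (assumption): 5 chunks, 40 ms apart.
async function* slowTokens() {
  for (let i = 0; i < 5; i++) {
    await new Promise((resolve) => setTimeout(resolve, 40));
    yield `token${i} `;
  }
}

measureStream(slowTokens()).then(({ firstChunkMs, totalMs }) => {
  console.log(`first chunk after ${Math.round(firstChunkMs)} ms`);
  console.log(`complete after ${Math.round(totalMs)} ms`);
});
```

Without streaming, the user would wait for the full total before seeing anything. With streaming, the wait they actually notice is closer to the first number.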

Key UX Benefits of AI Streaming

Faster Perceived Performance

Users instantly see generated content instead of waiting for the full response.

Improved Engagement

Real-time typing effects make AI interactions feel natural and conversational.

Better User Retention

Users are less likely to close tabs or abandon requests.

Professional Product Experience

Streaming creates a premium AI application feel similar to leading AI platforms.

Real-Time Feedback

Users know the AI is actively working.

Why Node.js Is Perfect for AI Streaming

When it comes to building real-time AI systems, Node.js is one of the best backend technologies available.

Node.js is event-driven, asynchronous, and lightweight, making it ideal for handling continuous streaming connections.

Unlike thread-per-request architectures, Node.js uses a non-blocking event loop, so a single process can keep many real-time streams open at once while it waits on I/O.

That’s especially important for:

- AI chat applications

- AI assistants

- Live content generation

- AI coding tools

- Voice AI systems

- Real-time analytics platforms

How AI Streaming Works in Node.js

Modern AI providers such as OpenAI and Anthropic support streaming responses using simple API configurations.

One commonly used parameter is:

stream: true

This tells the AI provider to return generated tokens progressively instead of sending one large response.

In Node.js, developers can process those chunks in real time using asynchronous iteration.

Example:

for await (const chunk of response) {

  // The last chunk may carry no text, so fall back to an empty string.

  res.write(chunk.choices[0]?.delta?.content ?? "");

}

This continuously pushes generated text to the frontend as soon as it becomes available.
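
For readers who want to try the loop without an API key, here is a slightly fuller sketch. The `fakeCompletion` generator is a hypothetical stand-in for a provider SDK call made with `stream: true`. It only imitates the chunk shape used in the loop above, and `pipeCompletion` is our own illustrative helper, not part of any SDK.

```javascript
// Hypothetical stand-in for a provider SDK call made with `stream: true`.
// It yields chunks shaped like the ones in the loop above.
async function* fakeCompletion(text) {
  for (const word of text.split(" ")) {
    yield { choices: [{ delta: { content: word + " " } }] };
  }
  // A stream may end with a chunk that carries no text at all.
  yield { choices: [{ delta: {} }] };
}

// Writes each text delta to `res` as it arrives and returns the full text.
// `res` can be an http.ServerResponse or anything with write() and end().
async function pipeCompletion(stream, res) {
  let full = "";
  for await (const chunk of stream) {
    const text = chunk.choices[0]?.delta?.content ?? "";
    if (text) {
      res.write(text);
      full += text;
    }
  }
  res.end();
  return full;
}

// Usage: stream to the terminal instead of an HTTP response.
pipeCompletion(fakeCompletion("streamed one word at a time"), {
  write: (text) => process.stdout.write(text),
  end: () => process.stdout.write("\n"),
});
```

Returning the accumulated text is a design choice: it lets the server store the finished answer (for chat history, for example) after the stream ends.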

Popular Streaming Methods in AI Applications

There are multiple ways to stream AI responses in modern applications.

1. Server-Sent Events (SSE)

SSE is one of the most common approaches for AI chat systems.

Advantages

- Simple implementation

- Lightweight

- Perfect for one-way streaming

- Excellent browser support

Best For

- AI chatbots

- Content generators

- Live AI responses
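
As a rough illustration of SSE, the sketch below uses only Node's built-in `http` module. The `formatSseEvent` helper, the `fakeTokens` generator, the `done` event name, and the port are all assumptions made for this example. A real app would replace `fakeTokens` with its model call.

```javascript
const http = require("node:http");

// Formats one SSE message. Each line of the payload needs its own "data:"
// field, and a blank line terminates the message.
function formatSseEvent(data, event) {
  const lines = String(data).split("\n").map((line) => `data: ${line}`);
  if (event) lines.unshift(`event: ${event}`);
  return lines.join("\n") + "\n\n";
}

// Hypothetical token source standing in for a model call.
async function* fakeTokens() {
  for (const token of ["Streaming ", "with ", "SSE"]) {
    await new Promise((resolve) => setTimeout(resolve, 50));
    yield token;
  }
}

const server = http.createServer(async (req, res) => {
  res.writeHead(200, {
    "Content-Type": "text/event-stream",
    "Cache-Control": "no-cache",
    Connection: "keep-alive",
  });
  for await (const token of fakeTokens()) {
    res.write(formatSseEvent(JSON.stringify({ token })));
  }
  // A named event tells the client that the answer is complete.
  res.write(formatSseEvent("end of stream", "done"));
  res.end();
});

// server.listen(3000); // uncomment to try it; the port is arbitrary
```

In the browser, `new EventSource(url)` would receive the unnamed messages through `onmessage` and the named event through `addEventListener("done", ...)`. Calling `close()` in that listener stops the automatic reconnect that `EventSource` performs when a connection ends.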

2. WebSockets

WebSockets provide bidirectional communication between client and server.

Advantages

- Real-time communication

- Two-way interaction

- Lower latency

Best For

- AI collaboration tools

- Multiplayer AI systems

- Live AI coding assistants

- Voice-based AI platforms
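
The main thing WebSockets add is that the client can keep talking while the server is streaming, for example to cancel a response halfway through. The sketch below shows that message handling in a transport-agnostic way. The message types (`prompt`, `cancel`, `token`, `done`, `cancelled`) are an invented protocol for illustration, `fakeTokens` is a hypothetical model stand-in, and `socket` is assumed to be any object with a `send(string)` method.

```javascript
// Hypothetical token source standing in for a model call.
async function* fakeTokens(prompt) {
  for (const word of `Echo: ${prompt}`.split(" ")) {
    await new Promise((resolve) => setTimeout(resolve, 10));
    yield word + " ";
  }
}

// Builds a message handler for one connection. `socket` is anything with a
// send(string) method, which matches the shape of a WebSocket object.
function createConnectionHandler(socket) {
  let cancelled = false;
  return async function onMessage(raw) {
    const message = JSON.parse(raw);
    if (message.type === "cancel") {
      cancelled = true; // the client can interrupt while tokens are flowing
      return;
    }
    if (message.type === "prompt") {
      cancelled = false;
      for await (const token of fakeTokens(message.text)) {
        if (cancelled) break;
        socket.send(JSON.stringify({ type: "token", token }));
      }
      socket.send(JSON.stringify({ type: cancelled ? "cancelled" : "done" }));
    }
  };
}

// Usage with a fake socket that just logs what would be sent.
const handler = createConnectionHandler({ send: (msg) => console.log(msg) });
handler(JSON.stringify({ type: "prompt", text: "hello" }));
```

With a WebSocket server library, the same handler would be attached to each connection's incoming message event, passing the message text to `onMessage`.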

3. HTTP Chunked Transfer

Another approach involves chunked HTTP responses where the server continuously sends partial data.

Advantages

- Easy implementation

- No WebSocket upgrade or extra protocol required

Best For

- Lightweight streaming systems

- Simple AI integrations
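
Here is a small sketch of chunked HTTP streaming using Node's built-in `http` module and the global `fetch` (available in Node 18+ and in browsers). The helper names `createChunkedServer` and `readStream` are our own, and the 20 ms delay only simulates generation time.

```javascript
const http = require("node:http");

// With no Content-Length header, Node's HTTP server sends the body using
// chunked transfer encoding, so each write() can reach the client separately.
function createChunkedServer(parts) {
  return http.createServer(async (req, res) => {
    res.writeHead(200, { "Content-Type": "text/plain; charset=utf-8" });
    for (const part of parts) {
      res.write(part);
      // Simulated generation time between chunks.
      await new Promise((resolve) => setTimeout(resolve, 20));
    }
    res.end();
  });
}

// Reads a streamed body piece by piece and reports each piece to onChunk.
async function readStream(url, onChunk) {
  const response = await fetch(url);
  const reader = response.body.getReader();
  const decoder = new TextDecoder();
  let text = "";
  while (true) {
    const { done, value } = await reader.read();
    if (done) break;
    // { stream: true } keeps multi-byte characters intact across chunks.
    const piece = decoder.decode(value, { stream: true });
    onChunk(piece);
    text += piece;
  }
  return text;
}
```

To try it, call `createChunkedServer(["Hello, ", "world"]).listen(3000)` and then `readStream("http://localhost:3000/", console.log)`. The port is arbitrary. How the pieces are split on the client side depends on the network, so the frontend should not assume one piece per `write()`.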

Real-World AI Applications Using Streaming

Today, many of the most widely used AI products rely on streaming architecture.

AI Chatbots

Streaming creates natural conversation experiences.

Instead of waiting for a complete answer, users see responses appearing instantly.

AI Content Generators

Blog writing tools, marketing generators, and SEO content systems use streaming to improve workflow speed.

AI Coding Assistants

AI coding platforms stream generated code line by line for better developer productivity.

AI Healthcare Systems

Real-time AI-powered healthcare platforms provide instant conversational guidance and booking assistance.

AI Education Platforms

Educational AI systems stream explanations interactively, improving learning engagement.

At Cloudexis Technolabs LLP, we are actively building AI-powered platforms with real-time streaming capabilities for healthcare, education, analytics, CRM systems, and AI automation solutions.

Technical Advantages of Streaming Architecture

Streaming is not only beneficial for users.

It also improves backend efficiency and application scalability.

Reduced Perceived Latency

Users begin consuming content immediately.

Better Resource Utilization

Streaming avoids holding large payloads in memory before sending responses.

Scalable AI Systems

Node.js handles asynchronous streams efficiently.

Improved Frontend Responsiveness

Frontend applications can progressively render incoming data.

Enhanced Mobile Experiences

Streaming reduces long wait times on mobile networks.

Best Practices for AI Streaming in Node.js

If you’re building AI applications with Node.js, these best practices can significantly improve your architecture.

Use Async Processing

Leverage asynchronous handling to avoid blocking operations.

Handle Partial Responses Gracefully

Frontend systems should properly render streamed chunks.

Add Loading Indicators

Even with streaming, users should see clear progress indicators.

Optimize Token Usage

Efficient prompt engineering reduces unnecessary token generation.

Monitor Stream Errors

Always handle broken connections or interrupted streams.
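
One way to approach this, sketched under a few assumptions: listen for the response's `close` event, use an `AbortController` to stop generation when the client goes away, and end the response cleanly if the upstream source fails mid-stream. `streamUntilClosed` and `makeTokens` are hypothetical names. A real SDK call would receive the same `AbortSignal` only if it supports one.

```javascript
// Streams tokens to `res` and stops early if the client disconnects.
// `makeTokens(signal)` is a hypothetical factory for the model's token stream.
async function streamUntilClosed(makeTokens, res) {
  const controller = new AbortController();
  const onClose = () => controller.abort();
  res.on("close", onClose);
  let sent = 0;
  try {
    for await (const token of makeTokens(controller.signal)) {
      if (controller.signal.aborted) break; // nobody is listening any more
      res.write(token);
      sent += 1;
    }
    if (!controller.signal.aborted) res.end();
  } catch (error) {
    // Upstream failure mid-stream: headers are already sent, so end the
    // response with a marker instead of trying to send a new status code.
    if (!controller.signal.aborted) res.end("\n[stream interrupted]");
  } finally {
    res.off("close", onClose);
  }
  return sent; // number of tokens actually written
}
```

Stopping the loop on disconnect matters for cost as well as cleanliness, since tokens generated for a closed tab are still billed by most providers.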

Use Scalable Infrastructure

AI streaming applications require scalable cloud deployment strategies.

Frontend Technologies for Streaming AI Apps

Streaming works even better when combined with modern frontend frameworks.

Popular frontend stacks include:

- React

- Next.js

- Vue.js

- Flutter

These frameworks can progressively display streamed content while maintaining smooth UI interactions.
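
On the frontend, the tricky part is that network chunks do not line up with messages. As an illustration, here is a minimal incremental parser for an SSE-style text stream. `createSseParser` is our own helper name, and the sketch assumes `\n` line endings and only reads `data:` fields, which is a simplification of the full SSE format.

```javascript
// Incremental parser for an SSE text stream. Network chunks can split a
// message anywhere, so incomplete text is buffered until the blank line
// that terminates a message arrives.
function createSseParser(onData) {
  let buffer = "";
  return function feed(text) {
    buffer += text;
    let boundary;
    while ((boundary = buffer.indexOf("\n\n")) !== -1) {
      const rawMessage = buffer.slice(0, boundary);
      buffer = buffer.slice(boundary + 2);
      const data = rawMessage
        .split("\n")
        .filter((line) => line.startsWith("data:"))
        .map((line) => line.slice(5).replace(/^ /, ""))
        .join("\n");
      if (data) onData(data);
    }
  };
}

// Usage: chunks arrive split in awkward places, but messages come out whole.
let output = "";
const feed = createSseParser((data) => {
  output += data; // in a UI framework, this would append to component state
});
feed("data: Hel");
feed("lo \n\ndata: wor");
feed("ld\n\n");
console.log(output);
```

In React or Vue, the `onData` callback would typically append to a state variable so the component re-renders as each message arrives.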

The Future of AI Applications in 2026

AI is no longer an optional feature.

It has become a core component of modern digital products.

But users now expect more than intelligence.

They expect:

- Instant feedback

- Real-time interactions

- Human-like responsiveness

- Conversational UX

- Seamless performance

The companies building successful AI products in 2026 are focusing heavily on optimizing user experience.

Streaming is now considered a standard requirement for high-quality AI applications.

Without streaming, AI systems feel outdated.

Why Businesses Should Invest in Streaming AI Solutions

Businesses building AI-powered platforms should prioritize streaming architecture from the beginning.

Benefits include:

- Better customer engagement

- Higher conversion rates

- Improved retention

- Stronger user satisfaction

- Faster perceived performance

- Competitive advantage

Whether it’s an AI SaaS platform, chatbot, healthcare solution, educational system, or enterprise automation platform, streaming creates a significantly better experience.

How Cloudexis Technolabs LLP Helps Businesses Build AI Platforms

At Cloudexis Technolabs LLP, we specialize in building scalable AI-powered applications with modern real-time technologies.

Our expertise includes:

- AI Chatbot Development

- Node.js AI Streaming Architecture

- AI SaaS Platforms

- AI Healthcare Systems

- AI Education Platforms

- AI Automation Solutions

- Real-Time Dashboard Systems

- Web & Mobile App Development

- Laravel & React Development

- Flutter Mobile Applications

We help startups, enterprises, and B2B clients develop high-performance AI products with fast, scalable, and user-friendly experiences.

Our focus is not only on AI accuracy but also on delivering premium UX through modern technologies like real-time streaming.

FAQs

1. What is AI real-time streaming in Node.js?

AI real-time streaming is a technique where AI-generated responses are sent to users token by token as they are produced, instead of being held back until the complete response is ready. In Node.js, this is commonly implemented using Server-Sent Events (SSE) or WebSockets for faster and smoother AI interactions.

2. Why is streaming important for AI applications?

Streaming improves user experience by reducing perceived waiting time. Users can see AI responses appearing live, which makes applications feel faster, more interactive, and more responsive compared to traditional request-response systems.

3. Which technologies are commonly used for AI streaming?

Popular technologies for AI streaming include:

- Node.js

- Server-Sent Events (SSE)

- WebSockets

- OpenAI Streaming APIs

- Anthropic APIs

- React.js and Next.js for frontend rendering

These technologies help create scalable and real-time AI-powered applications.

4. What types of applications benefit from AI streaming?

AI streaming is highly useful for:

- AI chatbots

- AI content generators

- AI coding assistants

- Healthcare AI platforms

- AI education systems

- Customer support automation

- Real-time analytics dashboards

Any application requiring live AI interaction can benefit from streaming architecture.

5. Why is Node.js considered ideal for AI streaming applications?

Node.js is event-driven and asynchronous, making it highly efficient for handling multiple real-time streaming connections simultaneously. Its lightweight architecture allows developers to build scalable, high-performance AI applications with smooth live-response experiences.